Issue 01 - SHIFT and DRIFT: The Case for Algorithmic Surveillance

Anne E. Burnley, MD, MHS, MS | The Epidemiology of Algorithms | Issue 01

WHAT DO AI SYSTEMS AND INFLUENZA VIRUSES HAVE IN COMMON?

They both shift and drift, and we have built a global surveillance system for only one of them.

THE EXPOSURE

I asked my new Occupational Health Resident this question as a “joke” in the clinic a few weeks ago: “What do AI systems and influenza viruses have in common?” I had just taken a course on “AI in Healthcare,” and he had spent a year studying epidemiology for an MPH. I could see the wheels turning in his head as he wondered what he was missing or whether it was a trick question. After all, we had just met. A minute or so later, he said, “I have no clue. What do they have in common?” Excitedly, I said, “They both shift and drift.” We both went quiet, the specific kind of quiet that happens when something meant as a joke turns out to be true.

Every clinician reading this has encountered a clinical decision support tool. An early warning score. A sepsis alert. A diagnostic algorithm. You’ve learned, over time, how much trust to put in each. You’ve calibrated your response. You’ve built a mental model of when it’s right and when it fires for no apparent reason.

Here is what you were almost certainly never told: The mental model you so carefully curated over the years may no longer be valid.

The algorithm you use today may not be the algorithm you were trained on. It may have been silently updated. Its performance may have drifted as the population you’re now treating diverged from the population it was trained on. No alarm fired. No notification was sent. The interface looks identical. But something changed, and your calibration, built on the old version, is now obsolete.

THE SIGNAL

Antigenic shift and drift: A borrowed framework that just fits.

In virology, antigenic drift is the slow, incremental change in the surface proteins of viruses, especially influenza viruses, over time. These mutation-driven changes are small: the immune system, trained on a previous version of the virus, still recognizes it, but less effectively. Protection erodes gradually and invisibly until it fails when it matters.

Antigenic shift is different. It is a sudden, discontinuous change: a reassortment of genetic material that produces a novel viral strain the immune system has never encountered. No prior immunity. No warning. The 1918 influenza pandemic and the 2009 H1N1 pandemic were both driven by novel influenza A strains against which most people had little or no immunity. The COVID-19 pandemic, which began in late 2019, was different again: it was caused not by a reassorted influenza strain but by an entirely new virus, SARS-CoV-2, that had never circulated in human populations.

The algorithmic parallels:

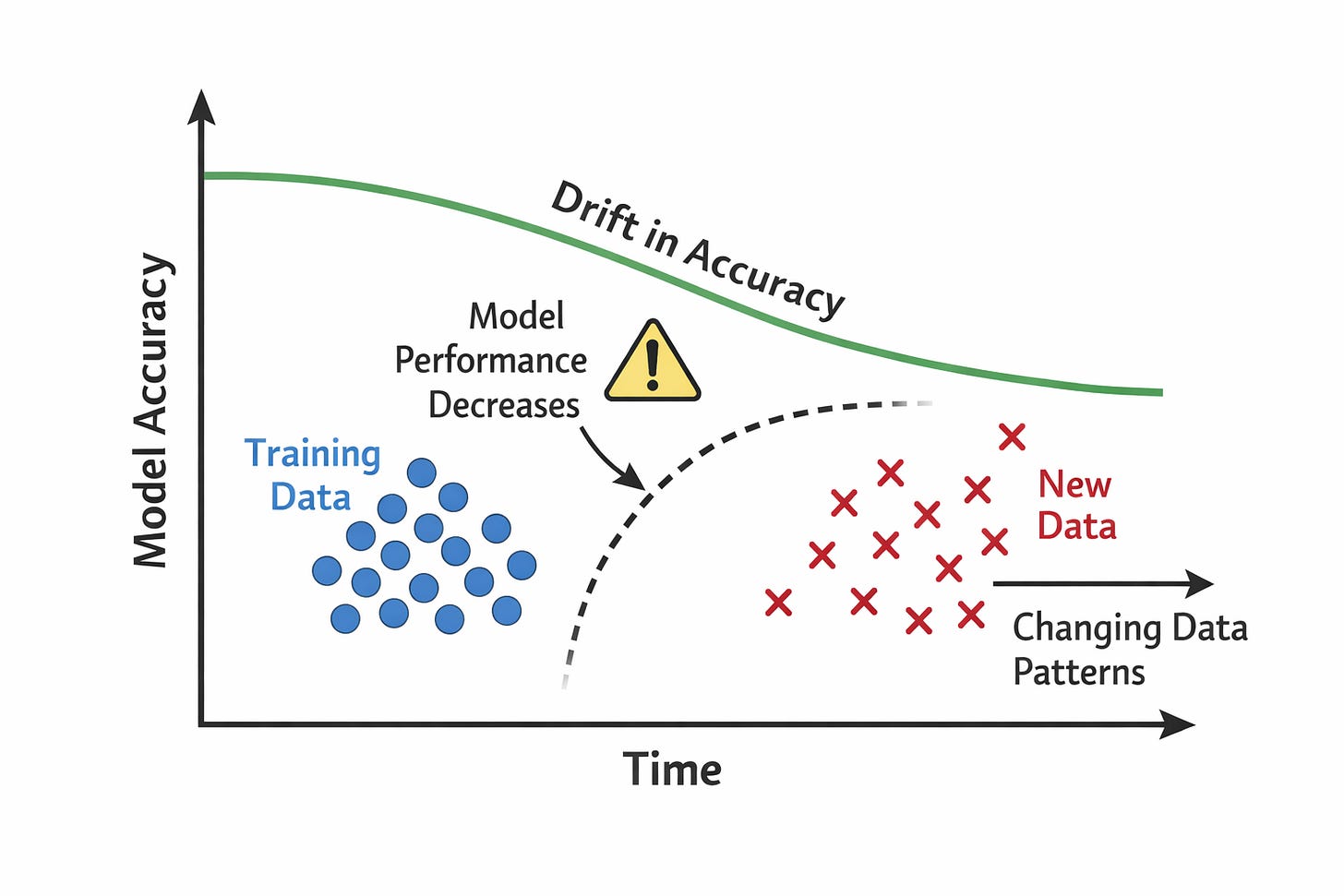

Algorithmic drift, or model drift, is the gradual decline in an algorithm’s performance over time. It happens when the data used to train the model diverges from the data it encounters in the real world, such as changes in the patient population or clinical practices.

The model still runs normally, and no error messages appear. Because the system looks the same, clinicians continue to use and trust it to the same degree as before.

However, as the gap between the training data and current conditions grows, the model’s predictions may become less accurate. Performance slowly erodes without immediate notice.
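For readers who want to see what watching for drift can mean in practice, here is a minimal sketch of one widely used monitoring check, the Population Stability Index (PSI). It compares how an input (or the model’s own risk scores) was distributed in the training data with how it is distributed today. This is one common technique, not the framework this newsletter will propose, and it assumes you can export a variable such as patient age for both periods. The function name, bin edges, and ages are hypothetical.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bin_edges: Sequence[float],
    eps: float = 1e-6,
) -> float:
    """Population Stability Index between two samples over shared bins.

    PSI = sum over bins of (p_current - p_baseline) * ln(p_current / p_baseline).
    A value of 0 means identical bin proportions; larger means more divergence.
    Values outside [bin_edges[0], bin_edges[-1]] are ignored in this sketch.
    """
    n_bins = len(bin_edges) - 1

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * n_bins
        for v in values:
            for i in range(n_bins):
                lo, hi = bin_edges[i], bin_edges[i + 1]
                # Bins are [lo, hi); the last bin also includes its upper edge.
                if lo <= v < hi or (i == n_bins - 1 and v == hi):
                    counts[i] += 1
                    break
        total = max(sum(counts), 1)
        # eps stops an empty bin from causing log(0) or division by zero.
        return [max(c / total, eps) for c in counts]

    p_base = proportions(baseline)
    p_cur = proportions(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(p_base, p_cur))


if __name__ == "__main__":
    # Hypothetical patient ages: the training cohort vs. this quarter's patients.
    training_ages = [34, 41, 52, 58, 63, 67, 71, 45, 39, 60]
    current_ages = [66, 72, 78, 81, 69, 74, 85, 77, 70, 63]
    age_bins = [18, 40, 60, 80, 120]
    psi = population_stability_index(training_ages, current_ages, age_bins)
    print(f"PSI for patient age: {psi:.3f}")
```

A rule of thumb borrowed from credit-risk modeling reads a PSI below about 0.1 as little change and above about 0.25 as substantial change; those cutoffs are conventions, not validated clinical thresholds. A shifted input distribution is also only a warning sign: confirming that accuracy has actually fallen requires outcomes.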

An algorithmic shift is a sudden, major change in how an algorithm behaves. It can happen when a system receives a major version update, a new vendor system is adopted, or a retrained model is deployed overnight.

Unlike gradual changes, an algorithmic shift is discontinuous. The system’s behavior can change immediately and significantly, leaving clinicians and users without time to adapt their expectations or update their clinical intuition.

Because these changes can occur without clear notice, there may be little “memory” of how the previous system behaved and no routine surveillance to detect what changed. As a result, decisions may be influenced by a tool that now behaves differently from the one users learned to trust.
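One way to give a tool that missing memory is to keep a fixed reference panel of test cases (synthetic or de-identified), record the tool’s outputs on them, and re-run the panel on a schedule. If the outputs change when no one announced a change, something shifted. The sketch below assumes you can call the tool programmatically through some `score_fn`; that function, the panel fields, and both toy model versions are hypothetical stand-ins, not any vendor’s API.

```python
import hashlib
import json
from typing import Callable, Mapping, Sequence

Case = Mapping[str, float]


def fingerprint(scores: Sequence[float], decimals: int = 4) -> str:
    """SHA-256 hash of a tool's outputs on a fixed reference panel.

    Scores are rounded first so trivial floating-point noise does not change the hash.
    """
    rounded = [round(s, decimals) for s in scores]
    return hashlib.sha256(json.dumps(rounded).encode("utf-8")).hexdigest()


def compare_to_baseline(
    score_fn: Callable[[Case], float],
    panel: Sequence[Case],
    baseline_scores: Sequence[float],
    tolerance: float = 0.01,
) -> dict:
    """Re-score the reference panel and summarize what changed since the baseline."""
    current = [score_fn(case) for case in panel]
    diffs = [abs(c - b) for c, b in zip(current, baseline_scores)]
    return {
        "same_fingerprint": fingerprint(current) == fingerprint(baseline_scores),
        "max_abs_change": max(diffs, default=0.0),
        "cases_changed": sum(d > tolerance for d in diffs),
    }


# Two hypothetical versions of a risk score; v2 stands in for a silent update.
def risk_v1(case: Case) -> float:
    return min(1.0, 0.10 * case["lactate"] + 0.002 * case["heart_rate"])


def risk_v2(case: Case) -> float:
    return min(1.0, 0.15 * case["lactate"] + 0.002 * case["heart_rate"])


if __name__ == "__main__":
    panel = [
        {"lactate": 1.2, "heart_rate": 88.0},
        {"lactate": 4.1, "heart_rate": 124.0},
    ]
    baseline = [risk_v1(c) for c in panel]  # recorded when the tool was validated
    print(compare_to_baseline(risk_v1, panel, baseline))  # same version re-run
    print(compare_to_baseline(risk_v2, panel, baseline))  # silently updated version
```

The hash answers the yes-or-no question (did anything change?), while the per-case differences show how much behavior moved and on which kinds of patients.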

The analogy is not merely poetic; it is structurally precise. In both cases, the change is invisible at the individual level while the harm is distributed across a population. Early detection therefore requires systematic surveillance, because no single clinician can see the pattern from within their own practice.

Algorithmic drift in clinical settings can only be detected by watching a population, and watching populations is, by definition, the work of epidemiology: the study of the distribution, causes, and control of disease in populations.

THE EVIDENCE

We have built a global surveillance system for influenza but almost nothing comparable for algorithms in healthcare settings.

The World Health Organization’s Global Influenza Surveillance and Response System coordinates sentinel sites across 114 countries. It sequences circulating strains continuously and detects drift as new variants emerge. It produces vaccine composition recommendations twice a year, one for each hemisphere, based on that surveillance. The entire architecture exists because we recognized after 1918 that a pathogen that shifts and drifts requires ongoing population-level monitoring, not just point-of-care response.

Now consider what exists for clinical AI. Most health systems have no formal process for detecting performance degradation in deployed algorithms. Vendors are not required to report model updates to clinical users. There is no equivalent of strain sequencing, no systematic comparison of how an algorithm behaves now versus six months ago. When a sepsis model starts missing more cases than before, no alarm goes off. The signal is buried in individual clinical outcomes, invisible without aggregation.
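The now-versus-six-months-ago comparison is, at its simplest, a comparison of two proportions. Here is a minimal sketch that tests whether a sepsis alert’s sensitivity differs between two periods, assuming you already have chart-confirmed sepsis cases for each period and know which ones the alert flagged. That labeling step is the expensive part, and it is exactly the aggregation no individual clinician can do. The function name and the counts in the example are made up for illustration.

```python
import math


def sensitivity_change_test(
    detected_then: int,
    cases_then: int,
    detected_now: int,
    cases_now: int,
) -> tuple[float, float, float]:
    """Two-proportion z-test for a change in an alert's sensitivity.

    detected_*: confirmed cases the alert flagged; cases_*: all confirmed cases.
    Returns (sensitivity_then, sensitivity_now, two_sided_p_value).
    """
    sens_then = detected_then / cases_then
    sens_now = detected_now / cases_now
    pooled = (detected_then + detected_now) / (cases_then + cases_now)
    se = math.sqrt(pooled * (1 - pooled) * (1 / cases_then + 1 / cases_now))
    if se == 0:
        # Every case caught (or every case missed) in both periods: no evidence of change.
        return sens_then, sens_now, 1.0
    z = (sens_now - sens_then) / se
    # Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return sens_then, sens_now, p_value


if __name__ == "__main__":
    # Made-up counts: 170 of 200 confirmed sepsis cases flagged six months ago,
    # 140 of 200 flagged in the most recent period.
    then, now, p = sensitivity_change_test(170, 200, 140, 200)
    print(f"sensitivity {then:.2f} -> {now:.2f}, two-sided p = {p:.4f}")
```

The normal approximation used here is reasonable only when each period has a decent number of confirmed cases; with small counts, an exact test would be the safer choice.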

The gap in numbers:

The FDA has cleared over 700 AI/ML-based medical devices. Post-market surveillance requirements for algorithmic performance degradation are minimal. There is no mandatory registry of algorithm adverse events and no equivalent of MedWatch for clinical AI. We are flying with instruments we do not calibrate.

This is not an argument against clinical AI, just as influenza surveillance is not an argument against the influenza vaccine. Surveillance is the science that makes the vaccine work: without it, you cannot know what you are vaccinating against.

The Epidemiology of Algorithms is not a critique of AI in medicine or healthcare in general. It is the science that makes AI in medicine safe.

OPEN QUESTION

What would algorithmic surveillance actually look like?

If we take the influenza analogy seriously, the architecture almost writes itself:

Sentinel sites: hospitals that systematically monitor algorithm performance against outcomes.
Strain sequencing: version-control systems that track changes between model iterations.
Signal detection: statistical methods that distinguish random variation from true performance degradation.
Governance: clear rules for who acts when a signal is detected, and how fast.
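To make signal detection concrete: a classic tool is the cumulative sum (CUSUM) chart, a sequential method from industrial quality control that has also been used to monitor clinical outcomes. The sketch below is a one-sided Bernoulli CUSUM that watches, case by case, whether a model is missing confirmed positive cases more often than an acceptable baseline rate. It is one possible technique, not the method this newsletter will propose. The function name, the baseline miss rate `p0`, the degraded rate `p1`, and the threshold are all illustrative assumptions; in practice the threshold would be tuned, usually by simulation, to an acceptable false-alarm rate.

```python
import math
from typing import Iterable, Optional


def bernoulli_cusum(
    missed: Iterable[bool],
    p0: float,
    p1: float,
    threshold: float,
) -> Optional[int]:
    """One-sided Bernoulli CUSUM for a rise in a model's miss rate.

    missed: per-case outcomes in time order, True when the model failed to flag
        a case later confirmed positive.
    p0: acceptable baseline miss rate; p1: degraded miss rate worth detecting (p1 > p0).
    Returns the 0-based index of the case at which the statistic first exceeds
    the threshold, or None if it never does.
    """
    if not 0 < p0 < p1 < 1:
        raise ValueError("expected 0 < p0 < p1 < 1")
    w_miss = math.log(p1 / p0)  # positive: each miss pushes the statistic up
    w_hit = math.log((1 - p1) / (1 - p0))  # negative: each caught case pulls it down
    s = 0.0
    for i, was_missed in enumerate(missed):
        # Flooring at zero keeps a long good run from masking a later decline.
        s = max(0.0, s + (w_miss if was_missed else w_hit))
        if s > threshold:
            return i
    return None


if __name__ == "__main__":
    # Hypothetical stream: 40 cases at roughly the baseline miss rate,
    # then a stretch where misses become much more common.
    stream = ([False] * 9 + [True]) * 4 + [False, True, True, False, True] * 4
    alarm_at = bernoulli_cusum(stream, p0=0.10, p1=0.30, threshold=3.0)
    print("no signal" if alarm_at is None else f"signal at case {alarm_at}")
```

Unlike a single before-and-after comparison, a CUSUM accumulates evidence continuously, so a sustained rise in misses raises a signal without waiting for a scheduled review.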

None of this exists at scale for algorithms. Building it is the work of this discipline. In the next issue, I will introduce the framework I have been developing, the five-component architecture for population-level algorithmic surveillance, and why the classical epidemiological triad of agent, host, and environment maps more precisely onto clinical AI than anything currently proposed in health informatics.

For now, I leave you with the question I want you to carry into your next clinical shift:

“Do you know if the algorithm you used today is the same algorithm you used six months ago?”

If you cannot answer that question with certainty, you are practicing in a surveillance gap. That gap now has a name, and closing it is why this newsletter exists.

The Epidemiology of Algorithms

Anne E. Burnley, MD, MHS, MS

Founder & Writer